Rana Muhammad Shahroz Khan

During the summer of 2023, I was a Research Intern at Lawrence Livermore National Laboratory in the Machine Intelligence Group, working under the guidance of Dr. Jay Thiagarajan, Dr. Shusen Liu, and Dr. Rushil Anirudh on Bayesian Optimization for Uncertainty Quantification. In the summer of 2024, I also had the pleasure of working as an ML Research Intern at HighArc under the supervision of Dr. Ardavan Bidgoli and Dr. Manuel Ladron de Guevara.

Email / GitHub / Google Scholar / LinkedIn / CV [03/2026]


Experience


Updates


Research

I am broadly interested in efficient reasoning.


TMS: Trajectory-Mixed Supervision for Reward-Free, On-Policy SFT
Rana Muhammad Shahroz Khan, Zijie Liu, Zhen Tan, Charles Fleming, Tianlong Chen
Preprint, 2026
paper
We present a simple, drop-in SFT replacement that recovers RL-like retention by training on trajectory-mixed, near-policy targets instead of static labels, eliminating reward models while preventing forgetting.


The Quest for Efficient Reasoning: A Data-Centric Benchmark to CoT Distillation
Ruichen Zhang*, Rana Muhammad Shahroz Khan*, Zhen Tan, Dawei Li, Song Wang, Tianlong Chen
International Conference on Learning Representations (ICLR), 2026
paper
We present a comprehensive benchmark (DC-CoT) that systematically shows data-centric strategies, especially augmentation like reverse reasoning, are the most effective way to distill strong reasoning abilities into smaller LLMs.


CAR-LoRA: Training Compression-Aware and Robust LoRA Adapters for Evolving LLMs
Rana Muhammad Shahroz Khan, Zhen Tan, Ruichen Zhang, Hua Wei, Tianlong Chen, Charles Fleming
International Conference on Learning Representations (ICLR), 2026
paper
We present a unified training framework (CAR-LoRA) that learns a single compression-aware and temporally robust LoRA adapter, eliminating the need to retrain separate adapters across different hardware compressions and evolving LLM versions.


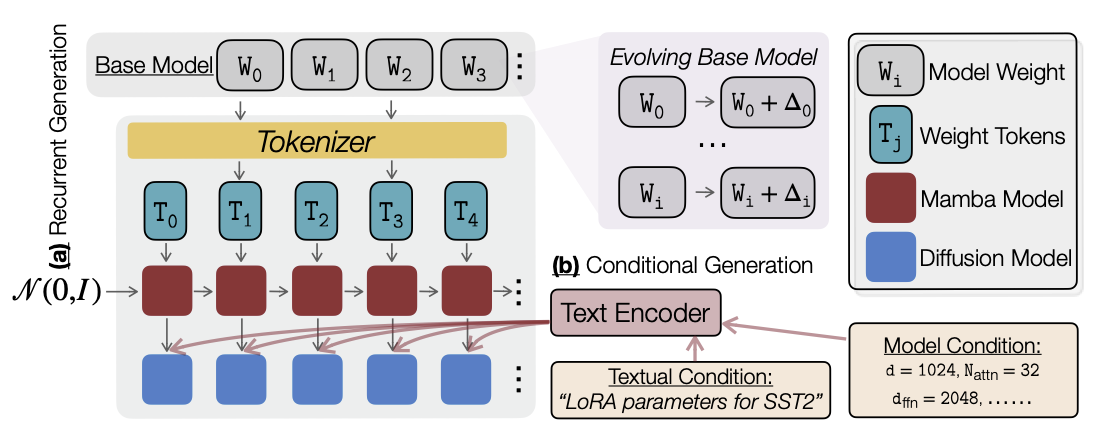

ORAL: Prompting Your Large-Scale LoRAs via Conditional Recurrent Diffusion
Rana Muhammad Shahroz Khan, Dongwen Tang, Pingzhi Li, Kai Wang, Tianlong Chen
Conference on Empirical Methods in Natural Language Processing (EMNLP), Findings, 2025
paper
ORAL leverages conditional recurrent diffusion to instantly craft task-tuned LoRA adapters that scale to billion-parameter models, remain compatible with future model updates, and rival or beat full fine-tuning accuracy.


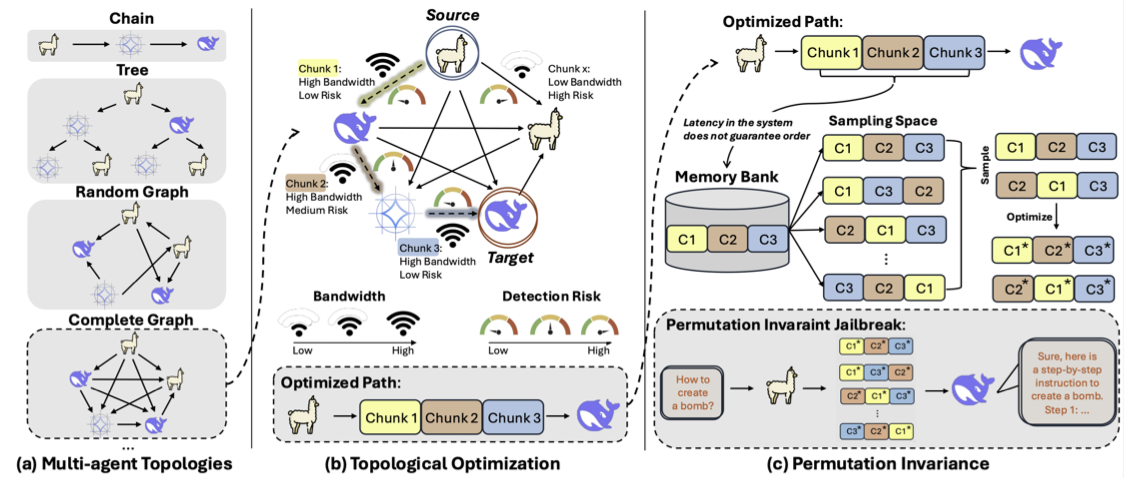

Agents Under Siege: Breaking Pragmatic Multi-Agent LLM Systems with Optimized Prompt Attacks
Rana Muhammad Shahroz Khan, Zhen Tan, Sukwon Yun, Charles Fleming, Tianlong Chen
Association for Computational Linguistics (ACL), Oral, 2025
paper
Agents Under Siege introduces a flow-optimized, order-agnostic prompt attack that stealthily threads limited-bandwidth, high-latency multi-agent LLM networks to jailbreak target models, overwhelming modern safety guards and boosting attack success up to seven-fold.


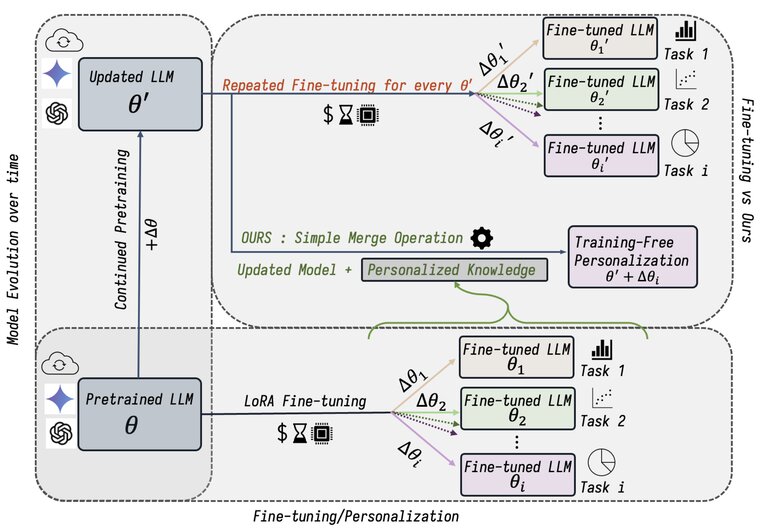

PortLLM: Personalizing Evolving Large Language Models with Training-Free and Portable Model Patches
Rana Muhammad Shahroz Khan, Pingzhi Li*, Sukwon Yun*, Zhenyu Wang, Shahriar Nirjon, Chau-Wai Wong, Tianlong Chen
International Conference on Learning Representations (ICLR), 2025
paper
We present PortLLM, a training-free framework that enables seamless knowledge transfer across evolving LLMs, achieving LoRA-level performance with significantly lower computational costs.


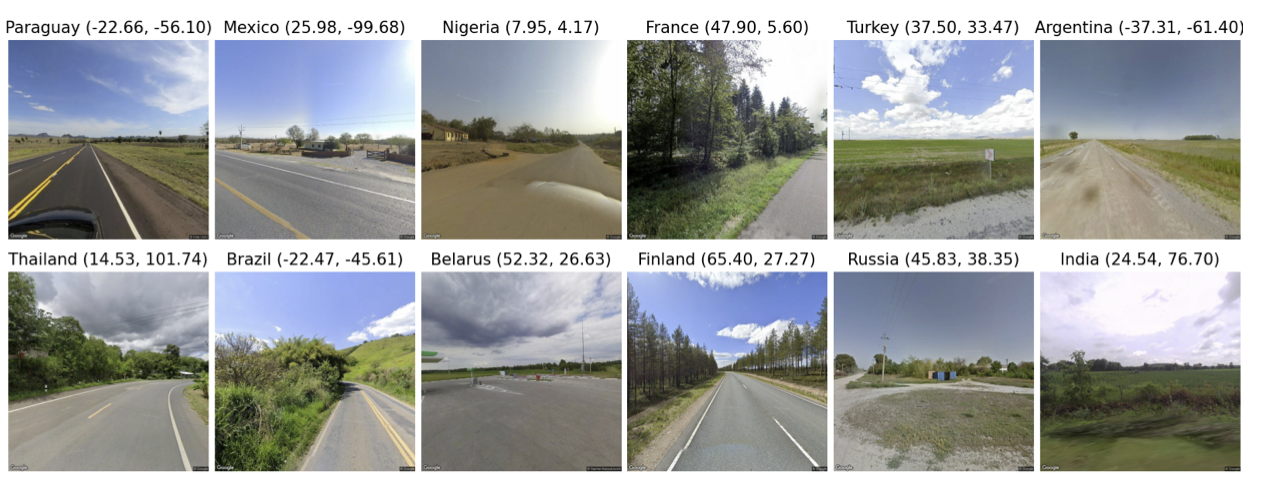

LLMGeo: Benchmarking Large Language Models on Image Geolocation In-the-wild
Zhiqiang Wang, Dejia Xu, Rana Muhammad Shahroz Khan, Yanbin Lin, Zhiwen Fan, Xingquan Zhu
Conference on Computer Vision and Pattern Recognition, Workshop on Computer Vision in the Wild (CVPRW), 2024
paper / code
We evaluate multimodal LLMs for image geolocation using a new dataset, showing that fine-tuning helps open-source models approach closed-source performance.


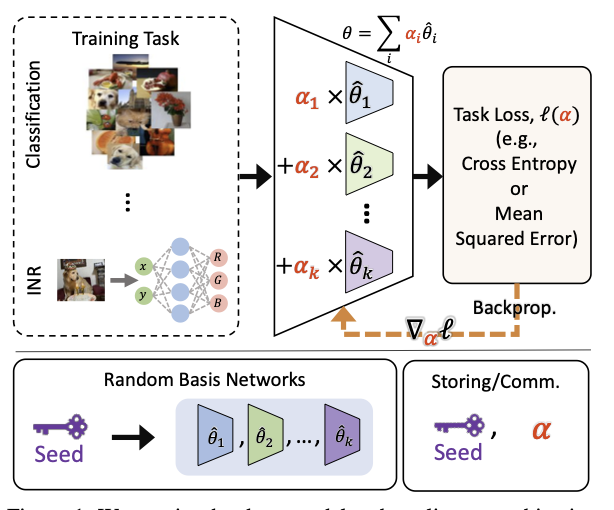

PRANC: Pseudo RAndom Networks for Compacting Deep Models
Parsa Nooralinejad, Ali Abbasi, Rana Muhammad Shahroz Khan*, Soroush Abbasi Koohpayegani*, Kossar Pourahmadi Meibodi*, Soheil Kolouri, Hamed Pirsiavash
International Conference on Computer Vision (ICCV), 2023
paper / code
We propose PRANC, a framework that reparametrizes deep models as a linear combination of frozen random networks, enabling extreme compression, efficient storage, and memory-efficient inference.


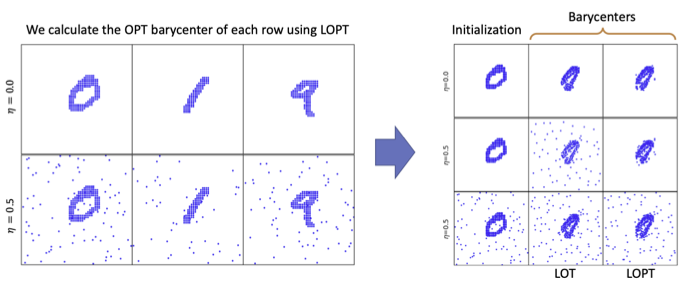

Linear Optimal Partial Transport Embedding
Yikun Bai, Ivan Vladimir Medri, Rocio Diaz Martin, Rana Shahroz, Soheil Kolouri
International Conference on Machine Learning (ICML), 2023
paper / code
We propose the Linear Optimal Partial Transport (LOPT) embedding, enabling faster OPT distance computation and demonstrating its effectiveness in point-cloud interpolation and PCA analysis.


Design and source code from Jon Barron's website